Research themes

We conduct research at the leading edge of High Performance Computing and Data Science.

Application performance optimisation and development

EPCC has a long history of hosting and working with high performance computing systems, from local clusters to the UK national services. As a result, we have decades' worth of experience optimising and fine-tuning scientific applications so that they achieve the best possible performance on the hardware they run on. In addition, where necessary, we develop new, more scalable applications to replace legacy software (e.g. MONC). The aim of our applications performance research is to maximise efficiency so that scientific results are delivered quickly and accurately.

Examples of our applications research are:

- Met Office NERC Cloud Model

- Investigating applications on the A64FX

- Progressive Load Balancing in Distributed Memory

- Performance Evaluation of Adaptive Routing on Dragonfly-based Production Systems

Parallel programming models

EPCC has been at the forefront of the development of parallel programming models for more than 30 years, starting with our own CHIMP message passing interface in the early 1990s. Since then, EPCC has contributed to the development and success of the MPI and OpenMP standards processes.

The hardware architectures of supercomputers have been evolving, with an increasing trend towards heterogeneity, which has a direct impact on programmability. EPCC's research on parallel programming models for distributed, and potentially heterogeneous, systems explores how to exploit compute resources most efficiently, for example by improving the interoperability of MPI and OpenMP with task-based models (such as OmpSs), CUDA and SYCL.

Examples of our parallel programming models research are:

- Driving asynchronous distributed tasks with events

- Compact Native Code Generation for Dynamic Languages on Micro-core Architectures

Parallel I/O performance

Contention for shared system resources, such as the parallel file system, can significantly impact application performance. EPCC is researching techniques to mitigate such performance bottlenecks and to exploit novel hardware resources to match application demands in HPC.

The use of non-volatile memory to optimise I/O performance for HPC and Data Science applications is one area of research EPCC is pursuing. This research uses a new generation of byte-addressable persistent memory technology, Intel's Optane DC Persistent Memory Module (DCPMM), and a prototype cluster developed as part of the EU-funded NEXTGenIO project. The research questions addressed include the different modes of operation for DCPMM (e.g. using it as slow high-capacity memory alongside DRAM or as very fast node-local storage in front of the parallel file system).

Examples of our I/O performance research are:

- An Early Evaluation of Intel’s Optane DC Persistent Memory Module and its Impact on High-Performance Scientific Applications

- Usage Scenarios for Byte-Addressable Persistent Memory in High-Performance and Data Intensive Computing

Machine learning

At EPCC, we examine the possibilities and opportunities presented by machine learning in a scientific environment, specifically pattern recognition and interpretation in large data sets.

This could mean anything from improving speed and reducing drilling risks in oil and gas exploration, to efficiently identifying anomalies in medical radiographic images.

Examples of our research on machine learning are:

- Machine learning on Crays to optimise petrophysical workflows in oil and gas exploration

- Using Machine Learning to reconstruct astronomical images

Novel architectures

EPCC investigates and assesses the viability of novel hardware and architectures at the leading edge of HPC. This has included working with the Met Office to explore the use of FPGAs in atmospheric modelling and weather forecasting, and exploring the acceleration of Nekbone on FPGAs.

Examples of our research on novel architectures are:

- Accelerating advection for atmospheric modelling on Xilinx and Intel FPGAs

- Application specific dataflow machine construction for programming FPGAs via Lucent

- Exploring the acceleration of Nekbone on reconfigurable architectures

- ePython - a tiny Python implementation for micro-core architectures

Quantum computing

EPCC’s Quantum Applications Group is exploring how quantum computing will impact HPC and Data Science. We are actively searching for applications of quantum computing, and investigating the quantum and classical resources required to achieve an advantage in computability, performance, or energy. We are also developing hybrid quantum and classical programming models, focusing on those which are suitable for HPC. Finally, we are developing and improving our ability to simulate quantum computing using our classical HPC resources to better support quantum algorithm and application development.

We are collaborating directly with quantum computing experts across the UK through these organisations:

- Quantum Computing Application Cluster

A multi-disciplinary collaboration between the Universities of Edinburgh, Glasgow, and Strathclyde that is working to accelerate the development of quantum computing technologies in Scotland.

- Quantum Software Lab

A dedicated Quantum Software Lab at the University of Edinburgh that works alongside the National Quantum Computing Centre to identify, develop, and validate real-world use cases for quantum computing.

Computational imaging

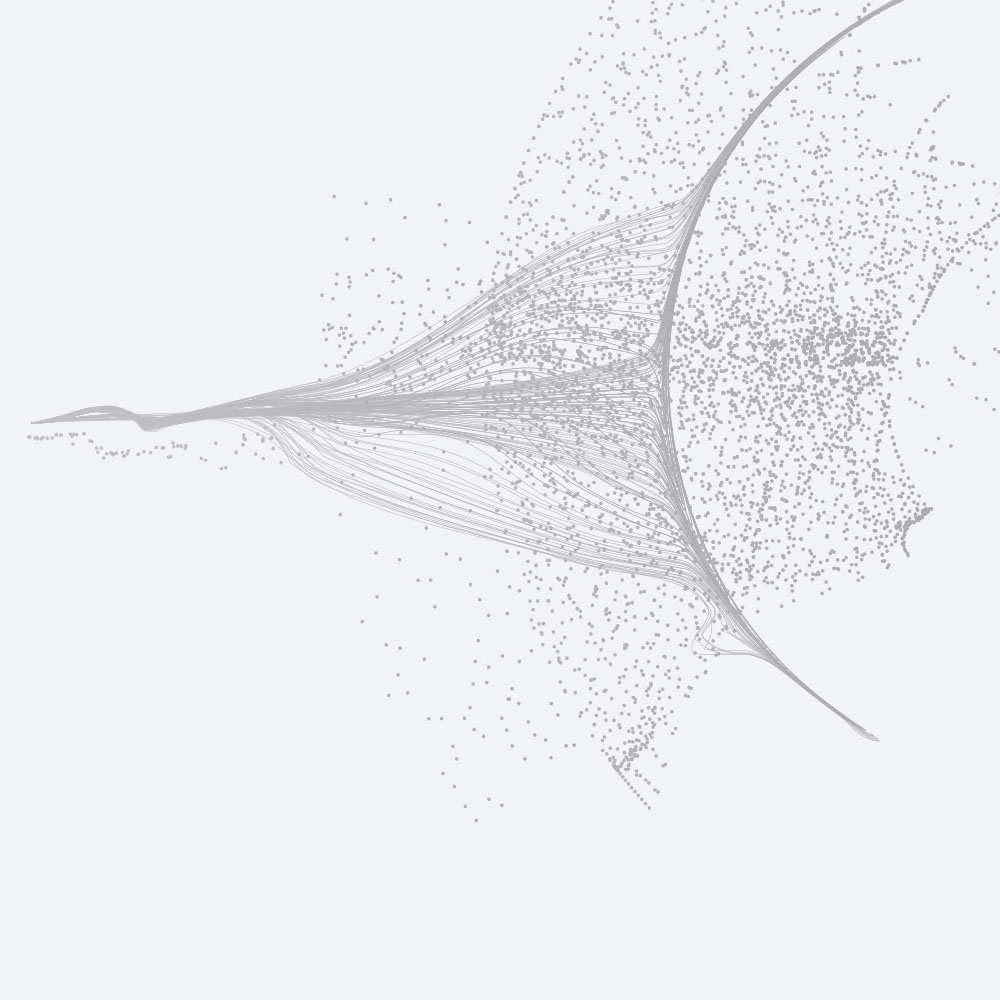

In collaboration with academics and researchers from the medical imaging, radio astronomy and optimisation algorithm disciplines, we are working on applications and algorithms to generate high-resolution, high-precision images from medical imaging and radio telescope datasets.

Applying novel optimisation approaches, built on the foundations of compressive sensing, allows faint signals to be resolved and high dynamic range images to be reconstructed. However, these approaches are computationally intensive. Coupled with the increase in imaging data from next-generation instruments such as the SKA telescope and 4D MRI machines, this leads to very high requirements for computing resources or very long run times for reconstructions.

EPCC collaborations are addressing this issue, both by parallelising and optimising applications used for these tasks, and by applying techniques such as machine learning and dimensionality reduction to reduce the computational cost overall.

Examples of our research in computational imaging:

- Parallel faceted imaging in radio interferometry via proximal splitting (Faceted HyperSARA): II. Code and real data proof of concept

- Parallel faceted imaging in radio interferometry via proximal splitting (Faceted HyperSARA)

- First AI for deep super-resolution wide-field imaging in radio astronomy: unveiling structure in ESO 137-006

Research software engineering

EPCC is one of the founding groups involved in research software engineering. As the lead site for the Software Sustainability Institute, it was instrumental in the development of research software engineering as a profession and, subsequently, as an area of research. EPCC collaborates with researchers from across the world on topics related to research software policy and practice.

Examples of our research in this area include:

- Understanding how the software used in research is developed and maintained, including which software engineering practices can be shown to be effective.

- How research software is recognised in scholarly communications, for instance the prevalence of software citation and software authorship, and the adoption of the FAIR for Research Software principles.

- The role and use of software in different research communities, explored through mixed-methods landscape studies on “Software and skills for research computing in the UK” and “Understanding the software and data used in the social sciences”.

- Understanding Equity, Diversity and Inclusion Challenges Within the Research Software Community: examining evidence for the lack of diversity in the field, as well as highlighting areas where the community is becoming more diverse, and identifying interventions which could address challenges.