One tiny edge, two tiny edges... one trillion edges!

17 August 2023

Ludovic Capelli, Teaching Fellow at EPCC, lets us in on his PhD years.

During my studies, I attended the Centre for Doctoral Training in Pervasive Parallelism (CDT PPar), then directed by Professor Cole from the School of Informatics at the University of Edinburgh.

This four-year programme comprises a one-year Master of Science by Research (MScR), which acts as a transition to the world of research, followed by a three-year PhD. Before starting my PhD, I went to the National Institute of Informatics (NII) in Tokyo, Japan, for a six-month internship under the supervision of Professor Hu. The research topic was the investigation of a programming model called vertex-centric, used in graph processing.

Let there be vertex-centric

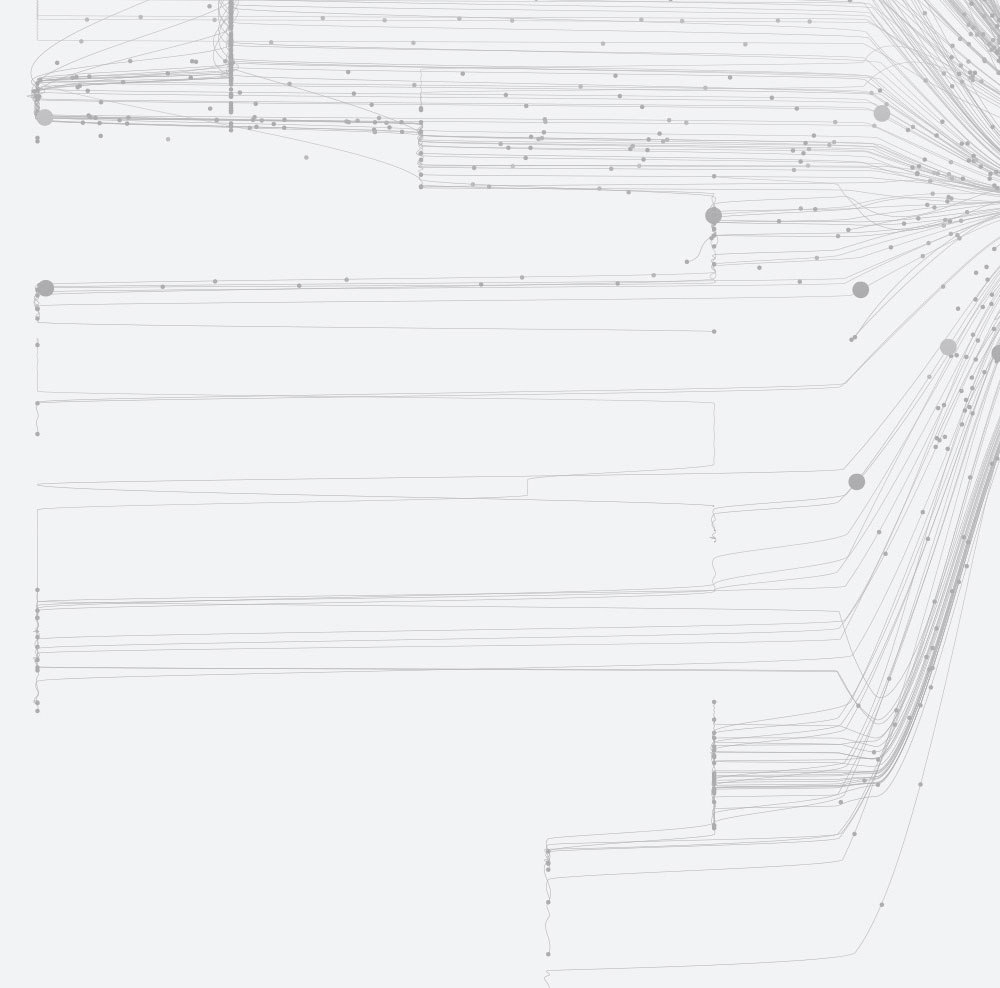

The field of graph processing has grown alongside the development of new approaches to graph processing applications due to the flexibility and applicability of the graph data structure (which is made of vertices linked with edges). One such approach is Google's Pregel framework, which introduced the vertex-centric programming model in 2010. This model expresses computation from a vertex perspective, performing inter-vertex communication via messages along incoming and outgoing edges.

Pregel's interface provides ease of programmability through a set of simple functions, enabling non-parallel programming experts to develop graph processing applications, while the associated challenges are offloaded to the framework. However, multiple sources of load imbalance, fine-grained synchronisation, and unpredictable memory access patterns make it challenging to implement the vertex-centric model efficiently on high-performance computing platforms without sacrificing programmability.

Advantages

Pregel's high-level programming interface, designed around a set of simple functions, provides ease of programmability to the user. The aim is to enable the development of graph processing applications without being exposed to low-level concerns, such as parallelism or optimisations, which typically requires expertise in high-performance computing.

These concerns are instead abstracted from the user and offloaded to the underlying framework. This allows the developer to focus on the algorithmic part, whilst HPC experts can focus on optimising the performance of the framework.

Challenges

However, fine-grained synchronisation, unpredictable memory access patterns, and multiple sources of load imbalance make it difficult to implement the vertex-centric model efficiently on high-performance computing platforms without sacrificing programmability.

Directions explored

This research focused on fusing vertex-centric and HPC, resulting in the development of a new shared-memory framework called iPregel, which successfully preserves both the programmer productivity benefits of vertex-centric while remaining competitive both in terms of performance and memory.

To extend single-node processing abilities, this research also explored the use of Non-Volatile Random Access Memory (NVRAM), where multiple versions of iPregel were developed to investigate various data movement strategies.

The research also investigated distributed-memory parallelism to address the memory limitation of a single node. The second framework developed, DiP, prioritises low-overhead performance comparable to that of iPregel rather than the highest scalability. Several buffer designs and network communication strategies were implemented in DiP to achieve this goal.

Outcomes

This research led to the design of new optimisation techniques, showcased through the shared-memory iPregel and distributed-memory DiP frameworks.

Shared memory

In the context of shared memory programming, different vertex-centric frameworks would expose variable performance, typically sacrificing vertex-centric abstractions, and thus degrading programmability; in return for additional gains in performance and memory efficiency. iPregel closes this gap, on both aspects, by several orders of magnitude.

The exploration of non-volatile memory allowed iPregel to process graphs of up to 750 billion edges, the largest graph processed on a single shared-memory node without the use of out-of-core computation, back in 2020.

Distributed memory

During the development of DiP, new limitations were encountered due to the size of the graphs processed, now gravitating around hundreds of billions of edges. As part of this investigation, new optimisation techniques were developed, such as a buffer design that allows certain vertex-centric operations to be embedded within communications.

Results gathered from experiments indicate that DiP demonstrates the ability to scale iPregel's competitiveness beyond single-node processing, ultimately reaching the biggest graph processed in this research, made up of 1.6 trillion edges.

Conclusions

Across all experiments, both frameworks proved to achieve the performance and memory efficiency gains presented while preserving programmability, which remains the key aspect of the vertex-centric programming model.

This research, available in my PhD thesis on the Edinburgh Research Archive, therefore demonstrates that by combining vertex-centricity and HPC, it is possible to maintain performance, memory efficiency and programmability.