Speeding up Python on ARCHER

21 May 2017

45 minutes is a long time for a computer: 2,700 long seconds. For a supercomputer like ARCHER, that is a lot of time to spend getting ready to do work, yet this is the problem faced by the Firedrake team, whom we work with as part of the Marine Technology project.

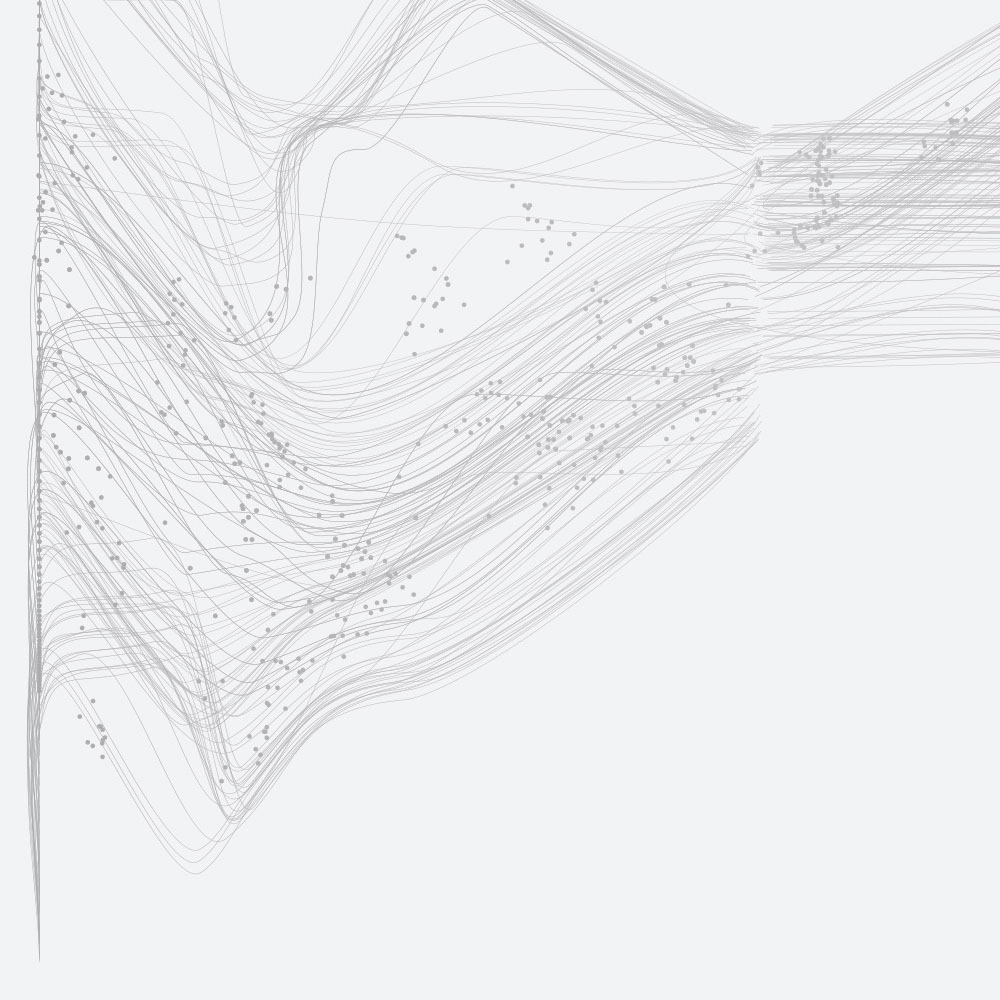

Firedrake is a portable, Python-based system for solving partial differential equations with the finite element method. On ARCHER it scales well in the computation phase, but not at startup. Why? At startup, each Python interpreter sets up its module search paths and loads a default set of modules. The first thing a Python program then does is import its own code, which is spread across many files on disk. And therein lies the rub: every interpreter reads the same files from disk, so access is effectively serialised, even on a parallel file system.
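A rough way to see how much file-system work a single import does is to record which modules, and which files behind them, appear in `sys.modules` when a package is imported. The sketch below uses only the standard library; `import_footprint` is a hypothetical helper of ours, and `json` is merely a stand-in for a real package such as `firedrake`.

```python
import importlib
import sys
import time


def import_footprint(module_name):
    """Import `module_name` and report what the import pulled in.

    Returns (seconds, new_modules, files), where `files` lists the
    source or extension files behind each newly loaded module. Each of
    those files had to be found and read from disk by this interpreter.
    """
    before = set(sys.modules)
    start = time.perf_counter()
    importlib.import_module(module_name)
    elapsed = time.perf_counter() - start
    new_modules = sorted(set(sys.modules) - before)
    # Built-in and frozen modules have no __file__, so they are skipped.
    files = [
        sys.modules[name].__file__
        for name in new_modules
        if getattr(sys.modules[name], "__file__", None)
    ]
    return elapsed, new_modules, files


if __name__ == "__main__":
    seconds, modules, files = import_footprint("json")
    print(f"import json: {seconds:.4f} s, "
          f"{len(modules)} new modules, {len(files)} files read")
    print("sys.path entries:", len(sys.path))
```

Every file in that list is located and read by each interpreter, so on shared storage the same files are read once per process. CPython 3.7 and later can also break down import time per module with `python -X importtime -c "import json"`.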

On 1,000 nodes, it takes the 24,000 Python interpreters about 45 minutes to get through this start-up routine: that is the time taken just to import the module, before any computational work can begin.
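As a quick check on those figures: 45 minutes is 2,700 s, and 24,000 interpreters over 1,000 nodes is 24 per node. If we assume, purely for illustration, that the shared file system serves the interpreters strictly one after another, each interpreter accounts for about 0.11 s of the total. This linear model is our own simplification, not a measurement.

```python
# Illustrative model only (our assumption): the shared file system serves
# interpreters one at a time, so startup grows linearly with the number
# of interpreters reading the same files.

STARTUP_SECONDS = 45 * 60          # about 45 minutes, from the text
NODES = 1_000                      # from the text
INTERPRETERS = 24_000              # from the text
PER_NODE = INTERPRETERS // NODES   # interpreters running on each node


def serialised_startup(readers, seconds_per_reader):
    """Total time if `readers` interpreters are served strictly in turn."""
    return readers * seconds_per_reader


# Implied per-interpreter share of the shared file system under this model.
per_reader = STARTUP_SECONDS / INTERPRETERS

if __name__ == "__main__":
    print(f"{STARTUP_SECONDS} s / {INTERPRETERS} interpreters "
          f"= {per_reader:.4f} s each; {PER_NODE} per node")
```

Under this model the total is proportional to the number of interpreters reading each copy of the files, which is the quantity the approaches that follow try to reduce.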

There are several ways to work around this problem, all of which aim to relieve the simultaneous load on the file system: reduce the node count, encapsulate Firedrake inside a container, or move the environment closer to the compute and so reduce disk access.

The node count cannot be reduced, since we want the machine's full power to solve large problems, and encapsulation technologies must be implemented at system level. We therefore explored the last approach: packaging the environment into a single archive that can be moved to the local (memory-based) storage on individual nodes.

Reduced congestion

In the example above, this reduces the congestion from 24,000:1 to 24:1, 24 being the number of processes running on each node. Packaging a Firedrake environment is a matter of:

- Copying the environment to a temporary location.

- Resolving symbolic links, replacing each one with a copy of the file it points to.

- Rewriting the paths inside files to point to the new location.

- Creating an archive from this temporary copy.
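Those four steps can be sketched with the Python standard library. This is a minimal illustration under our own assumptions, not the code of the tool described below: `package_env` and `rewrite_prefix` are hypothetical names, `/tmp/firedrake` is a placeholder target, and paths are rewritten only in files that decode as UTF-8 text, since changing the length of a string embedded in a compiled extension would corrupt it.

```python
import os
import shutil
import tarfile
import tempfile


def rewrite_prefix(root, old, new):
    """Replace `old` with `new` in every UTF-8 text file under `root`."""
    old_b, new_b = old.encode(), new.encode()
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            with open(path, "rb") as f:
                data = f.read()
            if old_b not in data:
                continue
            try:
                data.decode("utf-8")
            except UnicodeDecodeError:
                continue  # treat as binary and leave it untouched
            with open(path, "wb") as f:
                f.write(data.replace(old_b, new_b))


def package_env(env_dir, target_dir, archive_path):
    """Build a tarball of `env_dir` meant to be unpacked at `target_dir`."""
    env_dir = os.path.abspath(env_dir)
    with tempfile.TemporaryDirectory() as scratch:
        # 1. Copy the environment to a temporary location.
        # 2. symlinks=False makes copytree copy each link's target, so the
        #    copy holds real files instead of symbolic links.
        copy = os.path.join(scratch, "env")
        shutil.copytree(env_dir, copy, symlinks=False)
        # 3. Point absolute paths at the future location.
        rewrite_prefix(copy, env_dir, target_dir)
        # 4. Archive the copy, with members relative to the environment root.
        with tarfile.open(archive_path, "w:gz") as tar:
            tar.add(copy, arcname=".")
    return archive_path
```

For example, `package_env("/path/to/venv", "/tmp/firedrake", "firedrake.tar.gz")` would produce an archive whose scripts expect to live under `/tmp/firedrake`. Dangling symbolic links make `copytree` raise an error in this sketch rather than being skipped silently.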

The third step is important because we must know in advance the path from which the environment will be used. On ARCHER this is usually the memory-resident node-local storage (/tmp), which gives fast access to the files.
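On the compute side, the archive then has to be unpacked at exactly that path on every node before the interpreters start. A minimal sketch of that step follows, assuming that several processes on a node might call it at once; `unpack_env` is a hypothetical helper, and how it gets launched on each node depends on the job script, which is not shown.

```python
import os
import shutil
import tarfile
import tempfile


def unpack_env(archive_path, target_dir):
    """Unpack `archive_path` at `target_dir` unless it is already there.

    Extraction happens in a staging directory beside the target, which is
    then renamed into place. On POSIX, renaming a directory onto an
    existing non-empty directory fails, so if several processes on one
    node race, one rename wins and the others discard their copies.
    """
    if os.path.isdir(target_dir):
        return target_dir
    parent = os.path.dirname(os.path.abspath(target_dir))
    os.makedirs(parent, exist_ok=True)
    staging = tempfile.mkdtemp(dir=parent)
    try:
        with tarfile.open(archive_path) as tar:
            if hasattr(tarfile, "data_filter"):
                # Python versions with extraction filters: refuse unsafe members.
                tar.extractall(staging, filter="data")
            else:
                tar.extractall(staging)
        os.rename(staging, target_dir)
    except OSError:
        # Another process got there first; anything else is a real error.
        if not os.path.isdir(target_dir):
            raise
    finally:
        if os.path.isdir(staging):
            shutil.rmtree(staging)
    return target_dir
```

Extracting into a staging directory and renaming it into place means no process ever starts from a half-written environment.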

A solution

To spare users the complexity of doing this by hand, we have created a Python module providing a script that packages an existing environment for deployment and resolves the specific path issues. The results are fairly impressive for a conceptually simple process. It is available for download on GitHub:

https://github.com/firedrakeproject/relocate-venv