News

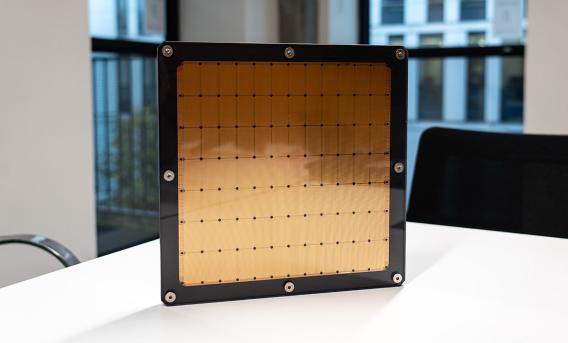

Chip and software breakthrough makes AI ten times faster

A system developed using the Cerebras CS-3 service operated by EPCC enables large language models (LLMs) to process information up to ten times faster than current AI systems.

High-Performance Computing Center Stuttgart and EPCC agree to strengthen collaboration

The partnership will build on a long history of cooperation between Stuttgart and Edinburgh to focus on supercomputing, modelling and simulation, artificial intelligence, and emerging computing technologies.

SC25 session: Building sustainable HPC outreach

As Eleanor Broadway explains, outreach is essential for growing and diversifying the HPC community by communicating the importance of our work to broader audiences.

Breaking I/O bottlenecks in scientific workflows: a new EPCC–University of Turin collaboration

Our new paper, “Overcoming Dynamic I/O Boundaries: a Double-Sided Streaming Methodology with dispel4py and CAPIO”, will be presented at WORKS 2025, part of the SC25 Workshops series.

A busy time at SC25!

Supercomputing (SC), the HPC community's largest conference, is held annually over the course of a week in a major US city; this year it is in St. Louis.

Three cinematic EPCC visualisations at SC25

Three new scientific visualisations by EPCC will be presented at the "Art of HPC" sessions at SC25.

EBDVF 2025: meet us in Copenhagen

EPCC will take part in the European Big Data Value Forum (EBDVF) in Copenhagen from 12 to 14 November 2025.

NERC Doctoral Landscape Awards in Exascale Computing for Earth, Environmental, and Sustainability Solutions (ExaGEO DLA)

Applications are now open for 2026/2027 entry to ExaGEO PhDs.

ARCHER2 Celebration of Science 2026

Registration and the call for posters are now open for the ARCHER2 Celebration of Science 2026!

Join the Software Sustainability Institute's Research Software Camps

The Software Sustainability Institute (SSI) runs free online Research Software Camps (RSCs) once a year over the course of two weeks.